Image generated by Deeptech Times using ChatGPT

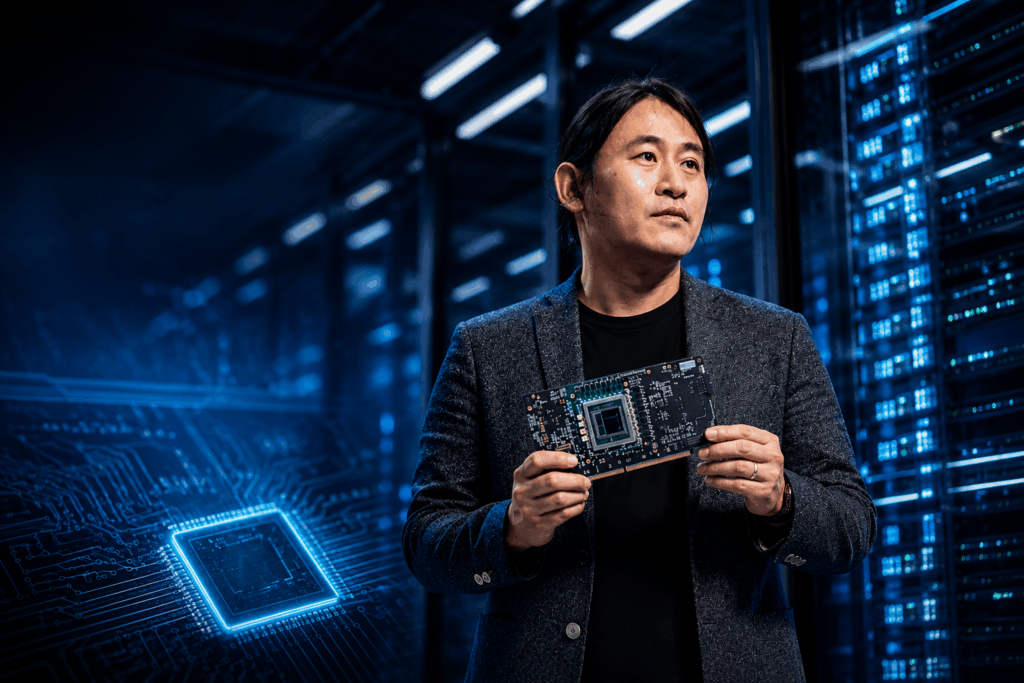

For the better part of the AI boom, one assumption has gone largely unquestioned: that the future of AI will be built on the back of GPUs. But if you listen closely to June Paik, founder and CEO of FuriosaAI, that assumption is already beginning to fracture.

FuriosaAI develops next-generation AI semiconductor solutions focused on high-performance, energy-efficient neural processing units (NPUs) designed specifically for AI inference workloads.

Unlike traditional GPUs built for broader parallel computing tasks, FuriosaAI’s AI-native architecture is optimised to run large language models and advanced AI applications with significantly lower power consumption and higher efficiency. Its NPUs are aimed at enabling scalable and sustainable AI infrastructure for enterprises, cloud providers and sovereign AI initiatives across industries.

In an exclusive interview with Deeptech Times in Singapore, Paik lays out a thesis that is both technical and geopolitical in nature: the next phase of AI will be defined not by more powerful GPUs but by a structural shift towards AI-native silicon, meaning purpose-built architectures designed not for general computation but for the realities of modern AI workloads.

And in that shift, the stakes extend far beyond performance. They reach into energy sustainability, national strategy and the very shape of the global AI ecosystem.

The GPU ceiling and why it matters now

At the heart of Paik’s proposition is a simple observation: GPUs were never designed for AI in the first place.

Originally built for graphics rendering, GPUs evolved into highly parallel, general-purpose compute engines. That flexibility helped fuel the deep learning revolution. But it is also increasingly becoming their limitation.

“GPU actually started as a rendering device,” Paik explains. “It can run mining. It can run scientific computing. It’s essentially a general-purpose parallel machine. That means there are also fundamental inefficiencies when you try to use it for AI workloads.”

The inefficiency he refers to is not only a matter of raw compute but also of energy. In Paik’s framing, the real constraint in AI is no longer performance but power efficiency at scale. As models grow larger and inference becomes continuous, the cost of energy becomes the dominant factor in AI economics.

His analogy is telling: “You can think of it like the difference between a gasoline car and an electric car. Both achieve transportation, but the underlying technology and efficiency is completely different.”

In this view, AI-native chips are not incremental improvements. They represent a paradigm shift: streamlined architectures that remove unnecessary components and optimise exclusively for AI.

The inference economy is driving the shift

This architectural rethink is arriving at a critical moment. The AI industry is transitioning from a training-centric model to what Paik describes as an inference-driven economy. Training may capture headlines, but inference, the running of models in real-world applications, is where scale, cost and energy pressures collide.

“The token cost is exploding right now,” Paik notes. “Agent decoding and everything, we need much more sustainable and efficient solutions.”

This is where FuriosaAI is positioning itself as an enabler of efficient, production-scale AI deployment, rather than a challenger in brute-force compute. Yet the challenge is far from trivial. Unlike fixed workloads such as Bitcoin mining, AI workloads are constantly evolving.

“AI workloads are not stable,” Paik says. “Models keep evolving, so the challenge is to build an architecture that is optimized but still flexible enough to support frontier models.”

That tension, between optimisation and adaptability, may well define the next decade of semiconductor innovation.

The dawn of heterogeneous data centres

If GPUs are no longer the sole centre of gravity, what replaces them? Paik points to an emerging reality: the heterogeneous data centre.

In this model, different types of chips, such as GPUs, TPUs and inference accelerators, coexist, each optimised for specific workloads. Crucially, this is not a future scenario. It is already happening.

“Google Gemini is running mostly on TPUs. Anthropic models are running on AWS chips. Chinese models are running on domestic hardware,” Paik says. “It’s already diversifying.”

What’s changing is accessibility. Today, only frontier AI labs can orchestrate such complexity. But as tools mature, this heterogeneity will “trickle down” to enterprises. This implies that the AI stack is no longer controlled by a single architecture or a single vendor. It becomes a contested, multi-layered ecosystem.

AI chips as instruments of power

This technological shift is unfolding against a backdrop of intensifying geopolitical tension. AI chips have become strategic assets subject to export controls, supply chain restrictions and national industrial policies. In this context, companies like FuriosaAI occupy a unique position.

“Korea is somewhat neutral,” Paik observes. “Many countries want to diversify their chip supply rather than rely on a single source.”

This idea of diversification is central to the emerging concept of sovereign AI infrastructure, where nations seek not just access to AI but control over its foundational layers. For countries without domestic chip capabilities, the strategy is not self-sufficiency but optionality. And that creates space for new entrants.

“There are very few countries that can build AI chips – US, China, Korea,” Paik notes. “But many nations want alternatives.”

Capability as the real barrier, not capital

Despite the apparent opportunity, Paik is clear-eyed about the difficulty of competing in AI semiconductors.

“It’s very hard to make these chips,” he says. “We’ve worked on this technology for almost nine years.”

The barrier to entry is not just financial but deeply technical. Designing competitive AI silicon requires expertise across architecture, software stacks, manufacturing partnerships and ecosystem integration.

FuriosaAI’s early traction with customers like LG and Samsung serves as validation not just of its product, but also of its ability to meet enterprise-grade requirements.

“These companies have a very high bar,” Paik says. “Winning them means the product is commercially viable.”

Singapore, Asia and the new AI geography

Geography, too, is being redefined in this new AI order. For FuriosaAI, Singapore plays an instrumental role as a strategic hub.

“We identify Singapore as the hub of the APAC region,” Paik says, noting efforts to build local teams and partnerships.

From there, the company is expanding into Southeast Asia, with projects underway in Malaysia and Indonesia, while also eyeing opportunities in India’s emerging AI infrastructure buildout. According to Paik, AI is no longer a US-China duopoly. It is becoming a multi-polar ecosystem with regional hubs playing increasingly strategic roles.

Redefining AI success

For Paik, success is not simply about revenue or market share, although FuriosaAI is targeting billion-dollar scale within the next few years. Instead, it is about enabling a systemic transition.

“We want to lead the next generation of AI computing,” he says. “To make the transition to more sustainable AI.”

He draws a parallel with the electric vehicle revolution, where companies like Tesla did not just compete with incumbents but redefined the category itself.

In AI, that redefinition may hinge on a deceptively simple idea: that efficiency, not just scale, will determine the winners.

The AI industry has spent the last decade chasing scale. But as Paik’s perspective suggests, the next decade may be defined by something else entirely.

If GPUs were the engine of the first AI wave, AI-native silicon may well power the next. And in that transition, the question is no longer just who builds the most powerful models but who builds the most viable infrastructure to run them.

FuriosaAI is betting that the answer lies not in more of the same but in a fundamentally different approach to computing. The rest of the industry may soon be forced to decide whether it agrees.